Edited by Brian Birnbaum. This post updates my original AMD deep dive and my Q2 update.

1.0 AMD´s GPUs are Ready to Compete

Recent evidence suggests AMD´s GPUs are already competitive in the marketplace. Management´s remarks during the recent Q3 conference call corroborate this.

Almost ten years into my journey as an AMD shareholder, I continue to be more than pleased with the company´s evolution; my return since first investing in 2014 is 2,700%. Still, I believe the company to be severely undervalued at present. In Q3 we began to see AMD´s new product roadmap gain traction and position the company for continued non-linear growth over the next decade.

AI is quickly evolving into the world´s new computing platform. AMD is primed to take full advantage, repositioning as an AI-first organization. In my AMD deep dive, I explain why the company has a structural advantage over its peers and is indeed set to thrive as AI goes mainstream.

AMD has mastered chiplets over the last decade, which:

Boast much higher yields and therefore cost less than monolithic chips.

Match the computational power and efficiency of monolithic chips.
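To make the yield point concrete, here is a minimal sketch using the simple Poisson yield model, where the probability of a defect-free die falls exponentially with its area. The defect density, die area, and chiplet count below are hypothetical numbers picked for illustration, not AMD figures, and the sketch ignores packaging and interconnect costs.

```python
import math


def poisson_yield(die_area_cm2: float, defects_per_cm2: float) -> float:
    """Fraction of dies expected to have zero defects (simple Poisson model)."""
    return math.exp(-die_area_cm2 * defects_per_cm2)


# Hypothetical inputs, chosen only to illustrate the effect.
DEFECTS_PER_CM2 = 0.1
MONOLITHIC_AREA_CM2 = 6.0
CHIPLET_COUNT = 4
chiplet_area_cm2 = MONOLITHIC_AREA_CM2 / CHIPLET_COUNT

monolithic_yield = poisson_yield(MONOLITHIC_AREA_CM2, DEFECTS_PER_CM2)
chiplet_yield = poisson_yield(chiplet_area_cm2, DEFECTS_PER_CM2)

# Silicon that must be fabricated per working product. Chiplets are tested
# individually, so one defect scraps one small die rather than the whole chip.
monolithic_silicon_cm2 = MONOLITHIC_AREA_CM2 / monolithic_yield
chiplet_silicon_cm2 = CHIPLET_COUNT * chiplet_area_cm2 / chiplet_yield

print(f"Monolithic yield: {monolithic_yield:.1%}")
print(f"Chiplet yield:    {chiplet_yield:.1%}")
print(f"Silicon per good product: {monolithic_silicon_cm2:.2f} cm2 vs {chiplet_silicon_cm2:.2f} cm2")
```

Under these assumptions the same total silicon area yields far more working products when it is split into smaller dies, which is the cost advantage in a nutshell.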

AMD´s rise to prominence over the last decade is the result of leveraging chiplets to disrupt Intel in the CPU space. As I explain in the deep dive, it is now employing the same strategy to disrupt Nvidia´s dominance of the GPU space.

GPUs train and make inferences (i.e. predictions) with AI models. As AI evolves over the coming decades, the GPU market will grow exponentially–and AMD with it.

If AMD’s new GPUs are competitive, the company will benefit not only from increased Datacenter sales, but also from its ability to infuse each business segment with AI capabilities, driving growth on the top and bottom lines, along with improved margins.

On the Q3 conference call, management claims to have made “significant progress” in the Datacenter GPU business, with “significant customer traction” for the next generation MI300 chip. Additionally–and in line with previous guidance–Lisa Su said on the call that AMD Datacenter GPU revenue will be:

$400M in Q4 2023, implying a 50% QoQ growth of the Datacenter business.

Over $2B in FY2024.

$2B in FY2024 is a fraction of what Nvidia expects to sell during the same period. However, it’s a solid first step in AMD´s journey toward gaining GPU market share.

Abhi Venigalla of MosaicML offers a very interesting source of alternative data. Some months ago he shared research showing how easy it is to train an LLM (large language model) using AMD Instinct GPUs via PyTorch. He claims that, since the release of his work, community adoption of AMD GPUs has “exploded”.

[…] we further expanded our AI software ecosystem and made great progress enhancing the performance and features of our ROCm software in the quarter.

- Lisa Su, AMD CEO during the Q3 2023 conference call.

From Abhi´s new research, a few things stand out:

Training the same LLM on the same piece of hardware is 1.13X faster on ROCm 5.7 than on ROCm 5.4. I already knew AMD had a fast optimization pace on the hardware side, but this indicates that the company is beginning to operate similarly on the software side.

Note: ROCm is AMD´s equivalent of Nvidia´s CUDA.
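Part of the reason the switch is easy is that ROCm builds of PyTorch expose AMD GPUs through the same `torch.cuda` interface that Nvidia users already call. The sketch below is my own illustration, not code from Abhi´s research: the helper name `describe_backend` is hypothetical, and it simply reports which backend the installed PyTorch build targets.

```python
def describe_backend() -> str:
    """Report which GPU backend the installed PyTorch build targets, if any."""
    try:
        import torch
    except ImportError:
        return "PyTorch is not installed"

    if not torch.cuda.is_available():
        return "no GPU visible, falling back to CPU"

    # ROCm builds set torch.version.hip; CUDA builds leave it as None.
    hip_version = getattr(torch.version, "hip", None)
    backend = f"ROCm/HIP {hip_version}" if hip_version else f"CUDA {torch.version.cuda}"
    return f"{torch.cuda.get_device_name(0)} via {backend}"


print(describe_backend())
```

Training code written against `torch.cuda` therefore needs little or no change to run on an Instinct GPU, at least for the mainstream operations PyTorch covers.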

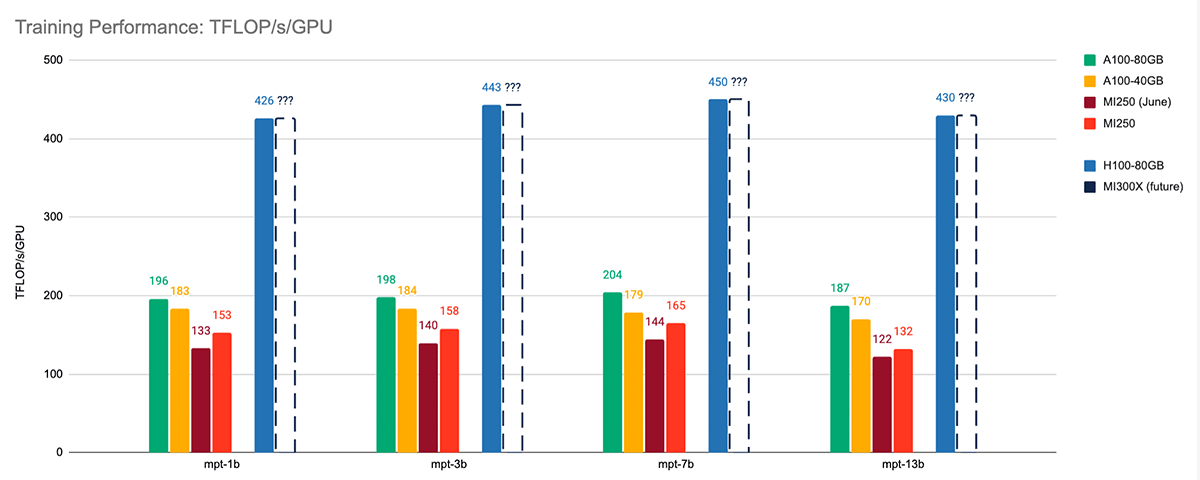

Comparing AMD´s MI250 against the same-generation Nvidia A100, the two perform similarly when training the same LLM. When comparing the former with the H100-80GB, which has much larger memory, the latter performs much better. You can visualize the performance deltas in the graph below.

In a post from back in May I explain why LLMs require hardware architecture that disaggregates memory from compute. Essentially, LLMs are large, and, in order to make rapid inferences, you need the LLM in question near the actual computing engine–in fact, it needs to fit in the memory on-chip. Incidentally, to train an LLM you also need to make inferences with it.

A chip with little memory will not be able to host an LLM on-chip and will actually require the model to be hosted across a number of chips. This disproportionately increases latency (time taken for information to move between memory and compute), which slows down inference and, ultimately, decreases performance.

The fundamental difference between Nvidia´s A100-40GB and its A100-80GB is that the latter has more memory, and faster memory at that: the respective bandwidths are 1,555 GB/s and 2,039 GB/s. Communication between the compute engine and the memory is therefore faster on the A100-80GB, which makes inference faster, and so forth.
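A back-of-the-envelope sketch shows why bandwidth matters. I read the two bandwidth figures as 1,555 GB/s and 2,039 GB/s, and I assume a hypothetical 7-billion-parameter model stored at 2 bytes per parameter, which is not a model from the research above. The estimate covers only the time to stream the weights from memory once and ignores compute, caching, and any overlap between the two.

```python
# Memory bandwidths of the two A100 variants, in GB/s.
A100_40GB_BANDWIDTH_GBPS = 1555
A100_80GB_BANDWIDTH_GBPS = 2039

# Hypothetical model: 7 billion parameters at 2 bytes each (16-bit weights).
PARAMETERS = 7e9
BYTES_PER_PARAMETER = 2
model_size_gb = PARAMETERS * BYTES_PER_PARAMETER / 1e9


def weight_read_ms(size_gb: float, bandwidth_gbps: float) -> float:
    """Milliseconds needed to read every weight from memory exactly once."""
    return size_gb / bandwidth_gbps * 1000


read_ms_40gb = weight_read_ms(model_size_gb, A100_40GB_BANDWIDTH_GBPS)
read_ms_80gb = weight_read_ms(model_size_gb, A100_80GB_BANDWIDTH_GBPS)
bandwidth_ratio = A100_80GB_BANDWIDTH_GBPS / A100_40GB_BANDWIDTH_GBPS

print(f"Model size: {model_size_gb:.0f} GB")
print(f"One pass over the weights: {read_ms_40gb:.2f} ms vs {read_ms_80gb:.2f} ms")
print(f"Bandwidth advantage of the 80GB part: {bandwidth_ratio:.2f}x")
```

With these inputs, one pass over the weights takes roughly 9.0 ms on the 40GB part and 6.9 ms on the 80GB part, a bandwidth advantage of about 31%.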

In the graph above, the performance delta between the A100-40GB and the A100-80GB reveals that doubling the memory more than doubles the TFLOP/s achieved per GPU during the training process.

The memory of AMD´s new MI300 chiplet-based GPU is 128GB. Given how much better the performance of the A100-80GB is compared to the A100-40GB, I suspect that the increased memory of the MI300 alone will make the chip competitive.

Abhi´s research is certainly consistent with Lisa Su´s comments during the Q3 conference call:

[…] validation of our MI300A and MI300X accelerators continue progressing to plan with performance now meeting or exceeding our expectations.

Naturally, this positions the two companies in a rat race. I believe the longer term will reveal the advantage in yields that chiplets confer. Q4 will be pivotal for AMD, as its MI300 GPU begins to ship.

2.0 PCs are Back

The Client segment has been a concern, albeit one shared by the whole of the semi sector. Nonetheless, it’s bounced back, just in time for AMD to revamp the division´s product lineup to position AI front and center via the XDNA architecture.

In Q2, Client segment revenue–composed, among other products, of PCs, GPUs, and APUs–was down 54% YoY. Per AMD CFO Jean Hu, the PC market was experiencing “one of the worst down cycles [of] the last three decades.” In Q3, Client revenue increased 42% YoY and 46% sequentially to $1.5B.

Management believes the PC market is normalizing in line with seasonal patterns. Management guided for PCs to recover by H2 FY2024 back when the downcycle began in mid-2022. Such a prediction reinforces the notion that management wields a world-class understanding of the business and industry writ large.

On the Q2 call, rather than worry about the Client unit, management focused on the GPU initiative. While I celebrated their focus on AI at the time, admittedly, I slowly began to worry that neglecting the Client segment would make it vulnerable to re-disruption from Intel.

However, during the Q3 call Lisa Su made clear that Ryzen AI was a top priority, noting that sales of Ryzen 7000 processors, featuring the Ryzen AI on-chip accelerator, “grew significantly.”

“[…] there are now more than 50 notebook designs powered by Ryzen AI in [the] market, and we are working closely with Microsoft on the next generation of Windows that will take advantage of our on-chip AI Engine to enable the biggest advances in the Windows user experience in more than 20 years.”

- Lisa Su, AMD CEO during the Q3 2023 conference call.

Said accelerator is based on AMD´s XDNA adaptive AI architecture, which came with the Xilinx acquisition. XDNA essentially changes the shape of the accelerator depending on which neural network is being run, in order to increase inference efficiency. In my deep dive I explain this concept in depth and why it is so revolutionary, at a time when the market could not make sense of the $50B Xilinx acquisition.

In essence, an architecture that adapts, at marginal cost, to the computational requirements of each neural network delivers higher performance at a lower cost than static chips. By acquiring the undisputed leader in FPGAs (Xilinx), whose technology powers XDNA, AMD has added this competitive advantage to its stack of structural competitive advantages.

In turn, AMD can insert FPGAs into any CPU engine and application, making XDNA highly scalable and broadly deployable, from notebooks to server CPUs. As I explain in the deep dive, CPUs will not take over training workloads anytime soon. But, in combination with FPGAs, they can take on plenty of inference workloads.

Thus, Ryzen AI cements the relevance of AMD´s Client division as consumers come to expect more and more AI functionality from their PCs. This phenomenon will extend itself to the Datacenter and game consoles. Eventually–as I explain in the deep dive–any and all kinds of computing devices will be expected to be able to make inferences without costly GPUs.

When asked whether she was concerned about ARM-based chips, this was Lisa´s response:

So look, the way we think about ARM, ARM is a partner in many respects so we use ARM throughout parts of our portfolio. I think as it relates to PCs, x86 is still the majority of the volume in PCs. And if you think about sort of the ecosystem around x86 and Windows, I think it's been a very robust ecosystem.

What I'm most excited about in PCs is actually the AI PC.

Absent from Q1 and Q2 2023 earnings releases is evidence of any increase in the R&D spend in the Client segment. Meanwhile, in Q3 2023, Datacenter operating income was $306M, or just 19% of revenue, versus $505M or 31% of revenue in Q3 2022. The delta in operating income as a percentage of revenue is due to increased R&D spend, to “support future AI revenue growth and product mix.”

Moving forward, it’s worth noting that AMD seems to be concentrating its AI research efforts in the Datacenter division. It is therefore likely that AMD is developing XDNA alongside the chiplet-based GPUs within the Datacenter operation.

In such a scenario, it is therefore not that PC AI efforts will feed into other divisions, but rather that the core tech being developed within the Datacenter division is transferable to the other segments.

3.0 AMD´s New Competitive Advantage

As hypothesized in the deep dive, AMD has finally confirmed that the product roadmap includes combining computing units. This may prove a meaningful competitive advantage.

In my deep dive, I explain how AMD´s Infinity Fabric is a key enabler of the company´s product roadmap for the next decade. As the most granular interconnect technology in the industry, Infinity Fabric allows AMD to connect chiplets. While Nvidia´s NVLink is indeed similar, the company has only really used the tech to link big chips.

Infinity Fabric permits AMD more agility in terms of combining otherwise disparate computing units. The aforementioned Ryzen 7000 processors combined with Ryzen AI on-chip accelerators, powered by XDNA, exemplify the combinations noted in the deep dive. During the Q3 call’s Q&A section, an analyst asked whether AMD will be combining GPUs with FPGAs soon. Lisa Su replied:

[…] the way I think about sort of FPGAs in the Datacenter, it's another compute element. We do use FPGAs or there are FPGAs in a number of the systems. I would say from a revenue contribution standpoint, it's still relatively small sort of in the near term.

We have some design wins going forward that we would see that content grow but that won't be so much in 2024, that it will be beyond that.

Per Lisa´s reply, we now know that AMD will indeed combine GPUs with FPGAs down the line, validating my initial hypothesis. Over the coming quarters, I look forward to analyzing qualitative signals generated by AMD´s ability to combine units.

My view is that, over the coming years, AMD will be able to satisfy specific customer requirements that competitors simply cannot. This should be highly accretive to AMD´s market share and margins.

And part of our value proposition, I think, to our Datacenter partners is, look, whatever compute element you need, whether it's CPUs or GPUs or FPGAs or DPUs or -- we have the ability to sort of bring those components together.

- Lisa Su, AMD CEO during the Q3 2023 conference call.

When asked about the threat from Intel being able to potentially manufacture their own chips more cheaply, Lisa said the following:

Going forward, I think having the CPU, the GPU, the FPGAs, the DPUs, I think it gives us actually a nice portfolio to really optimize not just on a single component basis but on sort of all of the different workloads that you need in the Datacenter.

4.0 Gaming and Embedded Enter Corrections

The decline of the two divisions will weigh on AMD´s financial performance for some time.

Sequentially, Datacenter and Client revenue are up 21% and 46%, respectively, while Gaming and Embedded are down 5% and 15% respectively. Per management´s remarks, it seems this will continue over the next couple of quarters.

[…] we would say Embedded, think about it down similar levels sort of in the teens compared to sort of Q3 was down in the teens and Q4 will be down in the teens.

And then Gaming, from a console standpoint, we do expect that to be down a bit more than that.

- Jean Hu, AMD CFO during the Q3 2023 conference call.

Management claims the decline of the Gaming and Embedded segments is due to the end of the console cycle for the former and high inventory levels for the latter. I have no reason to suspect otherwise. I trust AMD management to navigate the respective cycles, as they have done with the Client segment.

5.0 Financials

AMD remains in great financial health; profitability is set to surge in FY2024.

Income Statement

On the surface it may appear that AMD’s diversification dilutes Datacenter and Client segment profitability. In reality, the four segments’ synergy sets the stage for AMD to thrive over the coming decade.

Looking ahead, the investment we are making in AI across our Data Center, Client, Gaming, and the Embedded segment enable us to offer one of the best industry’s broadest portfolio, targeting the most compelling opportunities and positioning us to drive long-term profitable growth.

- Jean Hu, AMD CFO during the Q3 2023 conference call.

With Gaming and Embedded weighing on the total–and despite the aforementioned sequential progress of Datacenter and Client–total revenue is up only 4% YoY.

The Xilinx acquisition gave AMD its adaptive computing competitive advantage. Since then, AMD has been amortizing acquisition costs, taking a toll on GAAP financials. Nonetheless, we now see amortization costs declining steadily, with GAAP gross margin ticking up healthily.

On the other hand, non-GAAP gross margins are flat YoY, which means that the financials have not evolved much structurally. Although the product pipeline is looking excellent, these advancements haven’t yet trickled to the bottom line.

With YoY topline and margins flat, the only explanation for a sharp rise in GAAP earnings per share is a substantial decrease in amortization costs. On the other hand, non-GAAP earnings per share, which exclude such acquisition-related costs, are up only 4.4% YoY.

Nonetheless, gross profit seems to be reversing to the upside. If the new GPUs gain traction and the PC market remains stable, AMD will likely see a sharp rise in profitability towards H2 FY2024, when the Embedded and Gaming segments surge again.

Cash Flow Statement

Like gross profit, cash from operations seems poised to surge in FY2024, as normal conditions return to the market. In Q3, cash from operations came in at $421M, up 11% sequentially but down 56% YoY.

Free cash flow came in at $297M, with the trend very much tracking gross profit and cash from operations.

Balance Sheet

At the end of Q3, cash, cash equivalents, and short-term investments stood at a healthy $5.8B. In turn, long-term debt came in at $1.7B and capital leases at $395M, affording AMD a very comfortable net cash position.
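For reference, the net cash position implied by those figures can be worked out as follows. Treating capital leases as debt-like is my assumption here, and the inputs are the rounded figures quoted above, so the result is approximate.

```python
# Balance sheet figures quoted above, in billions of US dollars.
CASH_AND_SHORT_TERM_INVESTMENTS_B = 5.8
LONG_TERM_DEBT_B = 1.7
CAPITAL_LEASES_B = 0.395

# Assumption: capital leases are counted alongside debt.
total_debt_like_b = LONG_TERM_DEBT_B + CAPITAL_LEASES_B
net_cash_b = CASH_AND_SHORT_TERM_INVESTMENTS_B - total_debt_like_b

print(f"Debt-like obligations: ${total_debt_like_b:.3f}B")
print(f"Net cash:              ${net_cash_b:.3f}B")
```

That works out to roughly $3.7B of net cash.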

Inventory came down sequentially by $122M, with no immediate signs of overexpansion. AMD management has an excellent track record when it comes to managing inventory levels, as demonstrated in navigating the PC correction. I believe they will continue to do so as the Embedded and Gaming markets go through their own downturns.

During the quarter, AMD bought back $511M in stock, repurchasing 4.8M shares. Total shares outstanding have come down from 1.62B in Q1 2022 to 1.616B in Q3 2023.

SBC (stock-based compensation) trended up during the same period. It’s fair to say that the continued stock buybacks are more than compensating for the inevitable dilution brought about by the Xilinx acquisition.
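The buyback figures above imply a few numbers worth having on hand. This is a quick sketch using only the rounded figures quoted in this section, so treat the outputs as approximate.

```python
# Figures quoted above.
BUYBACK_USD_M = 511            # dollars spent on buybacks in Q3, in millions
SHARES_REPURCHASED_M = 4.8     # shares repurchased in Q3, in millions
SHARES_Q1_2022_B = 1.62        # shares outstanding in Q1 2022, in billions
SHARES_Q3_2023_B = 1.616       # shares outstanding in Q3 2023, in billions

# Average price paid per repurchased share.
average_price_usd = BUYBACK_USD_M / SHARES_REPURCHASED_M

# Net change in share count over the whole period, after all dilution.
net_change_pct = (SHARES_Q3_2023_B - SHARES_Q1_2022_B) / SHARES_Q1_2022_B * 100

# Share of the current count retired by the Q3 buyback alone.
q3_retired_pct = SHARES_REPURCHASED_M / (SHARES_Q3_2023_B * 1000) * 100

print(f"Average buyback price: ${average_price_usd:.2f}")
print(f"Net change in share count: {net_change_pct:.2f}%")
print(f"Retired by the Q3 buyback: {q3_retired_pct:.2f}%")
```

The implied average price is about $106 per share, and the net share count is down roughly a quarter of a percent over the period.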

6.0 Conclusion

AMD is set to meaningfully improve its financials as it enters the AI market over the coming year, despite some continued headwinds in Embedded and Gaming.

The company is executing well on its roadmap, positioned better than ever for the future of computing. Over the coming two years, I look forward to seeing AMD demonstrate that it can mix and match leading computing engines at will.

Tailoring computation to the specific needs of customers will drive margins and make AMD a much more profitable company going forward.

Until next time!

You can also reach me at:

Twitter: @alc2022

LinkedIn: antoniolinaresc